Article

AI Deep Dive Part 2: Data Privacy Concerns

Arete Analysis

Cybersecurity 101

A few weeks ago, Arete’s Threat Intelligence team outlined the history of artificial intelligence (AI). Today, we continue that conversation, exploring data privacy concerns associated with AI tools. AI use cases are often showcased to consumers without warning of potential dangers in their application. When a service is free, your data is often the cost of entry.

Today, we dive into three key elements of data privacy concerns in AI:

What information are you exposing publicly?

What data are you putting into AI applications?

And finally, how are you storing your data?

Operations Security (OPSEC): What information are you exposing publicly?

Publicly released information can have both positive and negative consequences. In classification by compilation, seemingly harmless pieces of open-source information are pieced together to expose proprietary or sensitive information, which gives credence to the age-old saying, “Loose lips sink ships.”

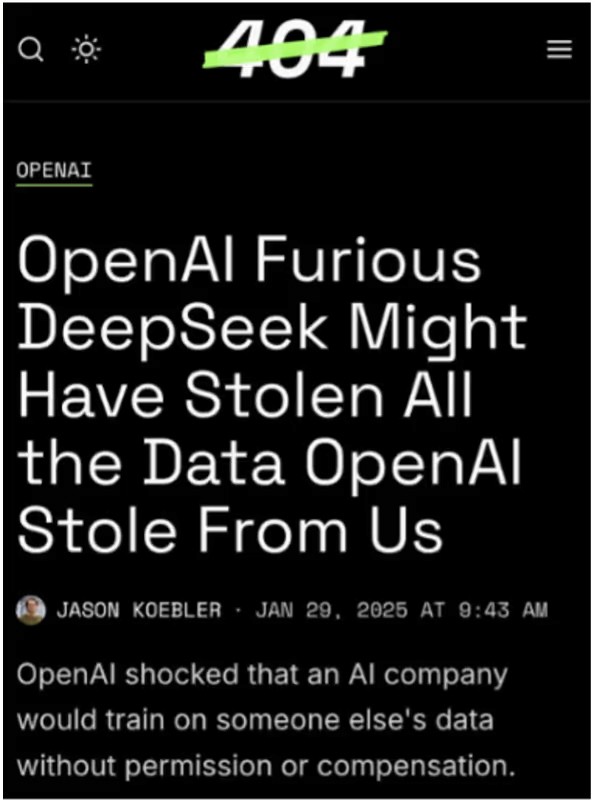

You may be wondering what this has to do with AI. Any information posted publicly can be used by developers to train AI algorithms. This could lead organizations to aid their competitors indirectly, should those competitors use the same AI platforms. An example of this is a 2023 lawsuit filed by artists against a number of companies that own AI image-generating tools. The artists argued that the AI companies used their art to train algorithms without the artists being properly compensated. In an early ruling, the court dismissed most of the claims, illustrating how difficult it is to prove what data was used to train AI algorithms.

What data are you putting into AI applications?

As the use of AI continues to expand, users should carefully consider what data they are exposing. When using popular public-facing AI platforms, such as those created by OpenAI, Microsoft, and Amazon, users must be aware of the type of data they input. Sensitive data, including client information, personally identifiable information (PII), and trade secrets, should not be used to prompt public-facing AI tools. Depending on the provider’s terms and settings, inputs into these tools may be retained and used to further train and develop the underlying models.
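One way to reduce accidental exposure is to scrub obvious identifiers from text before it is sent to a public-facing AI tool. The following is a minimal sketch in Python, assuming a few illustrative regular expressions; the `PATTERNS` table and the `redact_prompt` helper are hypothetical names, and real PII detection needs far broader coverage than these three patterns.

```python
import re

# Illustrative patterns only (assumptions, not a complete PII definition).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact_prompt(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with placeholders and count what was redacted."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        # subn returns the rewritten text and the number of substitutions.
        text, n = pattern.subn(f"[{label}_REDACTED]", text)
        if n:
            counts[label] = n
    return text, counts


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com or 555-123-4567 about the renewal."
    cleaned, found = redact_prompt(prompt)
    print(cleaned)
    print(found)
```

A filter like this is a safety net rather than a policy: it will miss names, client details, and trade secrets that do not follow a fixed pattern, so user training and clear usage rules still matter.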

How are you storing your data?

When an organization decides to create or collaborate on a new AI model, large amounts of data are required to train it. When considering where to store such data, cloud storage can appear to be an attractive option. However, it is important to weigh the options and risks associated with each data storage approach.

One example of such risk is the May 2024 data theft campaign that targeted customers of cloud-based data storage company Snowflake.

The threat actor responsible, UNC5537, subsequently extorted affected Snowflake customers, reportedly collecting at least $2.7 million in ransom payments for data suppression. The campaign was primarily driven by compromised credentials on accounts without multi-factor authentication (MFA), demonstrating the need for organizations to not only assess their third-party risk exposure but also continually implement security best practices.
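A simple audit can surface accounts that share the weakness described above. The sketch below assumes a hypothetical export of account records; the `Account` fields, the `flag_risky_accounts` helper, and the 90-day password age threshold are all assumptions for illustration and do not correspond to any specific vendor's API. The stale-password check is an added, commonly paired control, not something the incident description above specifies.

```python
from dataclasses import dataclass


@dataclass
class Account:
    # Hypothetical fields; map these to whatever your identity provider exports.
    username: str
    mfa_enabled: bool
    days_since_password_change: int


def flag_risky_accounts(accounts, max_password_age_days=90):
    """Return (username, reasons) for accounts missing MFA or with stale passwords."""
    findings = []
    for acct in accounts:
        reasons = []
        if not acct.mfa_enabled:
            reasons.append("no MFA")
        if acct.days_since_password_change > max_password_age_days:
            reasons.append("stale password")
        if reasons:
            findings.append((acct.username, reasons))
    return findings


if __name__ == "__main__":
    sample = [
        Account("alice", True, 30),
        Account("svc_etl", False, 400),
        Account("bob", False, 10),
    ]
    for username, reasons in flag_risky_accounts(sample):
        print(f"{username}: {', '.join(reasons)}")
```

Service accounts deserve particular attention in a review like this, since they are often excluded from MFA enrollment and their credentials are rarely rotated.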

Conclusion

AI is a powerful tool for organizations looking to enable employees to work within their strengths and increase efficiency. However, the improper use of AI can have disastrous effects. It is important for organizations to develop policies and training on the implementation and use of AI to set employees up for success and ensure the security of their environments. Tune in next week for the final installment of Arete’s AI Deep Dive: Understanding Biases & How Threat Actors Use AI.

Article

Arete's 2026 Q1 Crimeware Report

Harness Arete’s unique data and expertise on extortion and ransomware to inform your response to the evolving threat landscape.

Article

CMS Vulnerability Leads to ClickFix Campaign

Threat actors compromised at least 700 education and technology websites in a recent ClickFix campaign by exploiting a critical SQL injection flaw (CVE-2026-26980) in the Ghost content management system (CMS). Adversaries combined the vulnerability with the ClickFix social engineering tactic to steal admin keys and inject malicious JavaScript that displays a fake Cloudflare or CAPTCHA verification pop-up, tricking victims into copying and pasting a malicious command into their systems.

What’s Notable and Unique

This campaign is notable because it begins with exploitation of the system rather than targeting the end user first; the social engineering attempts follow only after the initial compromise. This hybrid attack style is likely being leveraged to bypass traditional defenses.

This recent campaign also highlights how trusted web properties with unpatched CMS vulnerabilities can be weaponized at scale. Rather than using the CMS compromise to perpetrate a single attack, threat actors turned it into a supply-chain attack that ultimately affected over 700 trusted websites.

Analyst Comments

As network defenders and their tools enhance threat detection capabilities, adversaries increasingly seek methods to bypass these defenses. By combining vulnerability exploitation, social engineering techniques, and staging for ancillary attacks, this campaign successfully bypassed traditional defenses and inflicted significant impact. Defending against hybrid cyberattacks requires comprehensive security controls beyond simply patching vulnerabilities. Organizations should focus on limiting movement within the environment, detecting abuse of trusted applications, and preventing end-user manipulation.
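As one illustration of detecting end-user manipulation, a defender could screen command lines (for example, from process creation logs) for shapes often associated with pasted one-liners. The sketch below is a heuristic under stated assumptions: the `SUSPICIOUS_PATTERNS` list and the `looks_like_clickfix` helper are hypothetical, and the patterns are generic examples rather than indicators taken from this campaign.

```python
import re

# Generic example patterns (assumptions), not indicators from this campaign.
SUSPICIOUS_PATTERNS = [
    # PowerShell launched with an encoded command argument.
    re.compile(r"\bpowershell(\.exe)?\b.*\s-(e|enc|encodedcommand)\b", re.IGNORECASE),
    # mshta pointed directly at a remote URL.
    re.compile(r"\bmshta(\.exe)?\b\s+https?://", re.IGNORECASE),
    # Download piped straight into an expression evaluator.
    re.compile(
        r"\b(iwr|invoke-webrequest|curl)\b.*\|\s*(iex|invoke-expression)\b",
        re.IGNORECASE,
    ),
]


def looks_like_clickfix(command: str) -> bool:
    """Return True if the command line matches any of the example patterns."""
    return any(pattern.search(command) for pattern in SUSPICIOUS_PATTERNS)


if __name__ == "__main__":
    for cmd in [
        "powershell -w hidden -enc SQBFAFgA",
        "iwr https://example.test/a.ps1 | iex",
        "git status",
    ]:
        print(cmd, "->", looks_like_clickfix(cmd))
```

Pattern matching of this kind produces both false positives and false negatives, so it works best as one signal feeding a broader detection pipeline rather than as a blocking control.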

Sources

700+ education and tech websites hijacked in huge ClickFix malware campaign

Under the engineering hood: Why Malwarebytes chose WordPress as its CMS

Think before you Click(Fix): Analyzing the ClickFix social engineering technique

Ghost CMS Vulnerability Exploited to Infect 700 Sites With ClickFix Malware

Article

Threat Actors Leverage Fake JPEG Files for Initial Access

In a recent campaign, researchers observed threat actors using fake JPEG image files as a delivery mechanism to initiate the deployment of additional malicious components. The fake JPEG files are typically distributed via phishing emails or other social engineering-based lures, but they are actually PowerShell-based malware that deploys a trojanized version of ConnectWise ScreenConnect to establish and maintain persistence in the compromised environment.
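A file that claims to be a JPEG but is really a script can often be caught by comparing its extension with its leading bytes, since genuine JPEG files begin with the bytes `FF D8 FF`. The following sketch assumes a local directory to scan; the `find_fake_jpegs` and `is_real_jpeg` helpers are hypothetical names introduced for illustration.

```python
from pathlib import Path

JPEG_MAGIC = b"\xff\xd8\xff"  # start-of-image marker FF D8, followed by FF
IMAGE_EXTENSIONS = {".jpg", ".jpeg"}


def is_real_jpeg(data: bytes) -> bool:
    """Check whether the given bytes begin with the JPEG signature."""
    return data.startswith(JPEG_MAGIC)


def find_fake_jpegs(directory):
    """Return paths with a JPEG extension whose content lacks the JPEG signature."""
    suspicious = []
    for path in Path(directory).rglob("*"):
        if path.is_file() and path.suffix.lower() in IMAGE_EXTENSIONS:
            with path.open("rb") as handle:
                header = handle.read(len(JPEG_MAGIC))
            if not is_real_jpeg(header):
                suspicious.append(path)
    return suspicious


if __name__ == "__main__":
    # Scans the current directory; adjust the path as needed.
    for path in find_fake_jpegs("."):
        print(f"Extension/content mismatch: {path}")
```

A matching signature does not prove a file is benign, because a payload can be appended to or hidden inside a valid image. The check only flags the simpler case where the extension and the content disagree.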

What’s Notable and Unique

This campaign leverages JPEG images as the initial lure, where the images are not merely decoys but part of the infection workflow. Victims are typically led to download or open an image that triggers hidden execution logic or redirects them to a payload-delivery sequence that initiates later stages of the intrusion chain.

The attack chain is designed to blend into legitimate environments, making detection more difficult. Execution typically relies on scripted or native Windows components, often including PowerShell or other living-off-the-land binaries, enabling fileless or near-fileless execution and reducing forensic artifacts on disk.

The multistage design ensures that the initial JPEG does not directly contain the full payload but instead triggers retrieval or decryption steps that progressively assemble the final malicious components in memory.

Analyst Comments

This campaign illustrates how threat actors continue to blur the line between legitimate file handling and malicious execution chains, indicating potential overlap with remote management or administrative tooling. The use of JPEG-based staging combined with script-based execution reflects a broader evolution toward a stealth-first intrusion design, in which file formats serve as triggers rather than payload containers.

Sources

OPERATION SILENTCANVAS : JPEG BASED MULTISTAGE POWERSHELL INTRUSION

Podcast

Cyber Risk and Insurance for Law Firms

In this episode of Bytes of Insight, host Vinny Sakore is joined by Laura Zaroski, Managing Director of the Law Firms Group at Gallagher, as they discuss the evolution of cyber risk for law firms. Tune in for firsthand insights on how to select the right cyber policy, the incident response process, and the nuances of ransom payments and sensitive data.